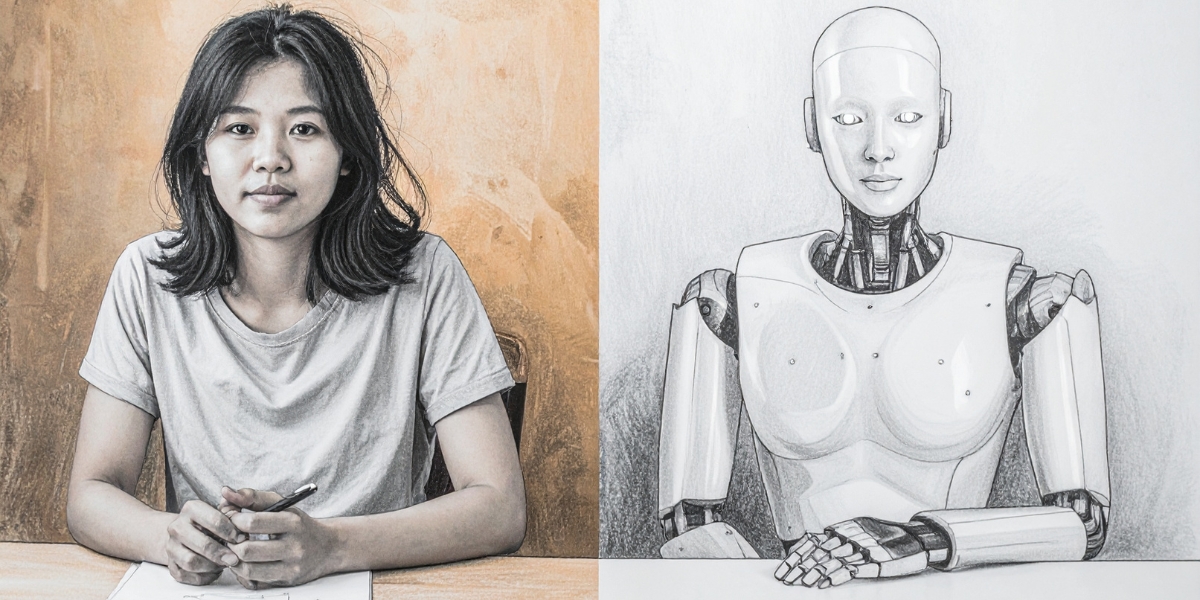

Across knowledge professions, something subtle but structural is happening. Generative AI has made it possible to write, design, code, and analyze faster than ever. The question is no longer whether AI will replace jobs. A more uncomfortable question is emerging: Where does the real value of our work actually lie?

Adobe recently became a symbol of this shift. Despite strong financial performance, its stock faced heavy pressure. The anxiety was not about revenue, but something deeper. If high-quality creative output can now be generated without years of tool mastery, where does value sit in that ecosystem?

This is not simply a story about a company. It is a story about value relocation.

When Making Is No Longer Scarce

For decades, professional creative work required both expensive tools and long training. Mastery of execution was the gatekeeper. The ability to manipulate complex interfaces and produce polished outcomes was rare. Scarcity lived in skill.

Generative AI is driving the democratization of creativity. It lowers the barrier to entry dramatically. High-quality output can now be produced with far less technical expertise. Options can be generated almost instantly. The cost of experimentation approaches zero. When everyone can produce something convincing, production itself stops being the differentiator. Scarcity moves elsewhere.

The Bottleneck Shifts Upward

If generating options becomes easy, choosing among them becomes harder. Teams now face a different kind of pressure:

- What problem are we actually trying to solve?

- Which of these many outputs truly fits our context?

- What needs to change so that the result is not merely plausible, but right?

In this new landscape, judgment is the primary bottleneck. Execution used to absorb the budget; now, deliberation does.

When making is cheap, the cost of a wrong direction becomes the greatest expense.

The Same Shift Is Happening in UX Research

Research is often described in terms of methods and deliverables: interviews, surveys, testing sessions, and reports. AI is already streamlining much of the operational work. Transcription is instant. Initial coding can be automated. Large volumes of qualitative data can be summarized in seconds.

But efficiency does not automatically produce clarity. In fact, it exposes something that was always true. The difficulty of research has never been collecting information. It has been deciding what that information means and what to do next. Research does not create value by organizing data. It creates value by improving decisions.

Why This Shift Is Especially Visible in Japan

In Japan, user behavior is shaped by context that is rarely verbalized directly. Factors such as politeness, group alignment, and situational awareness deeply influence communication. What is left unsaid often carries more meaning than what is expressed openly.

The real work lies in interpreting subtle signals: a pause, a qualified agreement, or a slight hesitation. These details can signal constraint, discomfort, or quiet resistance.

For teams outside Japan, those nuances are easy to misread. Data may look positive on the surface while underlying friction remains unresolved. In this environment, interpretation is the core of the work, rather than an added layer.

From Output to Direction

As AI increases the volume of possible outputs, it also increases the number of possible directions a team could take. That expansion of choice raises the stakes of decision-making. It has become easier to produce something reasonable. It has become harder to choose what is right.

Strategic UX research sits precisely at that point of tension. Its role is to reduce the cost of poor judgment by clarifying which signals matter and which are merely noise. It surfaces hidden assumptions and connects evidence to action in a way that can withstand scrutiny.

Research Earns Its Place Differently Now

In an environment shaped by AI, research cannot justify itself by process alone. Methods are increasingly accessible. Tools are increasingly automated. What remains scarce is thoughtful interpretation under uncertainty.

The future of UX research is focused on strengthening decisions rather than defending methodologies. It’s about helping teams choose with clarity.

At Uism, we work at this interpretive layer, helping teams navigate complexity and turn ambiguous signals into strategic direction. If you’re looking for a partner to sharpen your decision-making in Japan, let’s start a conversation.

